Best AI Code Security Tools in 2026: How AI Is Changing Application Security


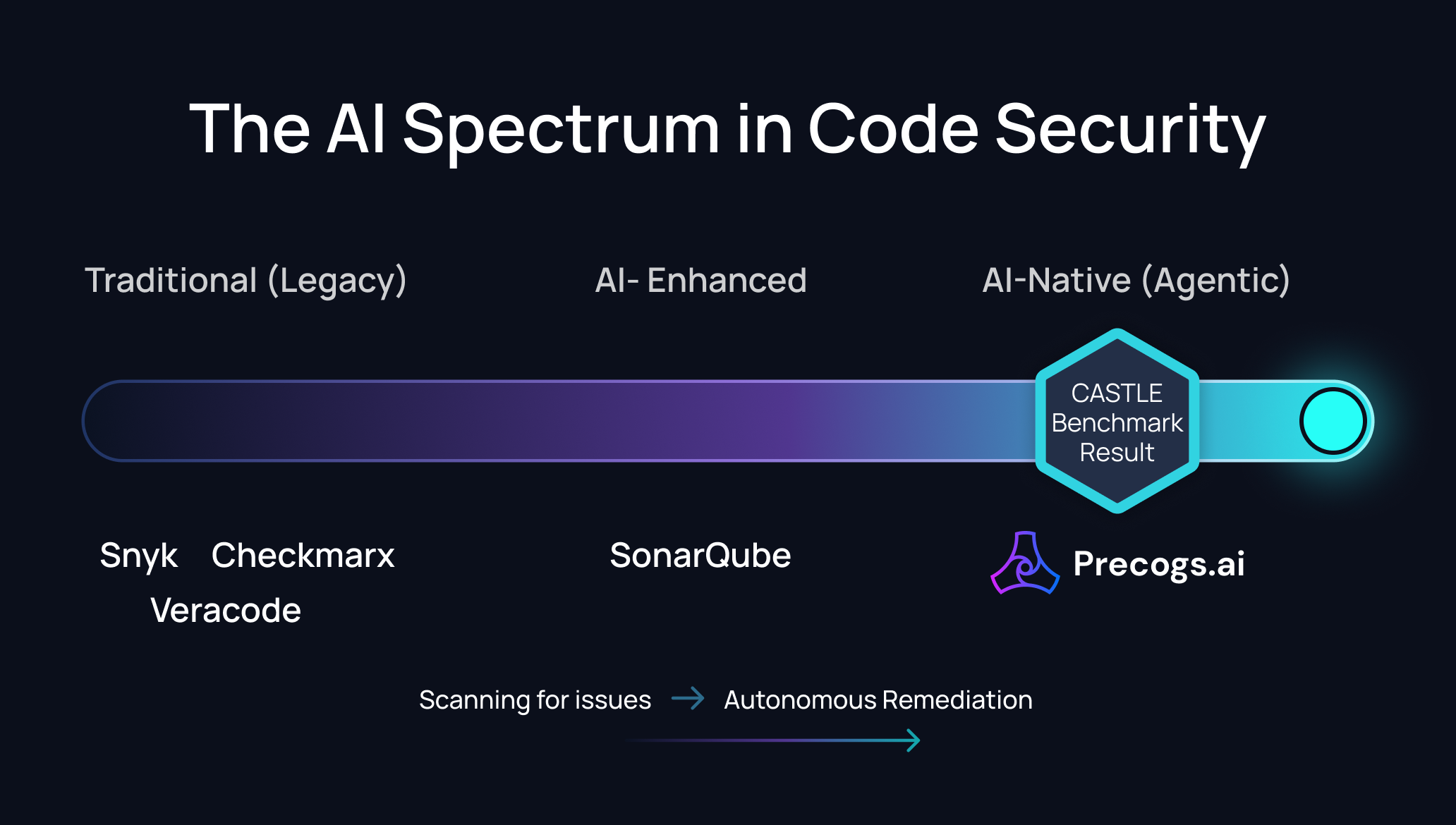

The AI Spectrum in Code Security

Understanding the Difference

| Level | What It Means | Examples |
|---|---|---|
| Rule-Based | Predefined patterns match known vulnerability signatures. Can't find what rules don't cover. | SonarQube, legacy SAST |
| Pattern-Matching | Flexible pattern syntax for code matching. Powerful but requires human-written rules. | Semgrep |
| AI-Assisted | AI helps triage and prioritise. Core detection is still traditional. | Snyk Code |
| AI-Augmented | AI enhances existing detection with suggestions and recommendations. | Checkmarx AI Security Champion |
| AI-Generated Fixes | AI writes remediation patches. Detection may still be traditional. | Veracode Fix |
| AI-Native (Agentic) | AI IS the detection engine. Multi-model ensemble detects, triages, fixes, maps to compliance, and delivers PRs autonomously. Includes data protection (PII, Pre-LLM Sanitization). | Precogs AI |
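
The "Can't find what rules don't cover" limitation is easy to see in miniature. The sketch below assumes a deliberately naive, hypothetical rule (not an actual SonarQube or Semgrep rule) that flags `execute()` calls building SQL with `+`. It fires on one spelling of a SQL injection and stays silent on the same bug written as an f-string.

```python
import re

# Hypothetical, deliberately naive rule: flag execute() calls whose first
# argument is a string literal immediately concatenated with "+".
CONCAT_SQL_RULE = re.compile(r"""execute\(\s*["'][^"']*["']\s*\+""")

def rule_matches(source: str) -> bool:
    """Return True if the naive concatenation rule fires on the source text."""
    return CONCAT_SQL_RULE.search(source) is not None

# The same injection flaw, written two different ways.
concatenated = 'cur.execute("SELECT * FROM users WHERE id = " + user_id)'
f_string = 'cur.execute(f"SELECT * FROM users WHERE id = {user_id}")'

print(rule_matches(concatenated))  # the rule covers this spelling
print(rule_matches(f_string))      # same bug, but no rule was written for it
```

Closing the gap means someone writing and maintaining a second rule, which is the maintenance burden the table attributes to rule-based and pattern-matching tools.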

Tool Comparison: AI Capabilities

| AI Feature | Precogs AI | Snyk | Checkmarx | Veracode | Semgrep | SonarQube |
|---|---|---|---|---|---|---|
| Agentic AI Workflow | ✅ Detect → triage → fix → PR → integrate | ❌ | ❌ | ⚠️ Fix only | ❌ | ❌ |
| AI Detection Engine | ✅ Multi-model ensemble | ⚠️ ML-assisted | ⚠️ AI query builder | ❌ Traditional | ❌ Patterns | ❌ Rules |
| Zero-Day Detection | ✅ Novel patterns via AI | ⚠️ Database-driven | ⚠️ Limited | ⚠️ Limited | ❌ Rule-only | ❌ Rule-only |
| AI Fix Generation | ✅ Full PR fixes | ⚠️ Limited | ⚠️ Suggestions | ✅ Veracode Fix | ⚠️ Limited | ⚠️ AI CodeFix |
| PII Detection | ✅ 99.2% precision | ❌ | ❌ | ❌ | ❌ | ❌ |
| Pre-LLM Sanitization | ✅ Strips PII/secrets/IP before AI | ❌ | ❌ | ❌ | ❌ | ❌ |
| Context-Aware Analysis | ✅ Cross-file data flow | ⚠️ Partial | ⚠️ Partial | ⚠️ Partial | ⚠️ Pro rules | ❌ |
| AI False Positive Reduction | ✅ 98% precision | ⚠️ Improves over time | ⚠️ AI triage | ❌ Manual | ⚠️ AI triage | ❌ |
| Real-Time CWE Mapping | ✅ With exploitability context | ✅ | ✅ | ✅ | ⚠️ | ⚠️ |
| Compliance Automation | ✅ SOC 2, HIPAA, ISO/SAE 21434 | ⚠️ OWASP only | ✅ Strong dashboards | ⚠️ Limited | ✅ Policy management | ❌ |

What Makes Precogs AI "AI-Native" and "Agentic"

1. Agentic AI Workflow: Beyond Detection

Unlike tools that bolt AI onto existing detection engines, Precogs was built with AI as the core. But the real difference is what happens AFTER detection. Precogs runs an agentic workflow: detect → triage by real-world exploitability → generate code fix → deliver as PR → map to compliance standards → learn from outcomes. This is end-to-end autonomous security, not "AI that helps you triage."
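
To make the shape of such a workflow concrete, here is a minimal sketch of the stages as chained functions. Every name in it (`Finding`, `run_workflow`, `OUTCOMES`, the stage functions) is hypothetical, and each stage is a stub; it illustrates the control flow of detect → triage → fix → PR → compliance → learn, not how Precogs implements it.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class Finding:
    """Hypothetical finding record handed from one workflow stage to the next."""
    cwe: str
    file: str
    exploitable: bool
    patch: str = ""
    history: List[str] = field(default_factory=list)

OUTCOMES: List[str] = []  # stand-in for whatever store a "learn" stage would feed

def detect(f: Finding) -> Finding:
    f.history.append("detect")
    return f

def triage(f: Finding) -> Finding:
    # Rank by real-world exploitability rather than raw severity.
    f.history.append("triage:high" if f.exploitable else "triage:low")
    return f

def generate_fix(f: Finding) -> Finding:
    f.patch = f"placeholder patch for {f.cwe} in {f.file}"
    f.history.append("fix")
    return f

def open_pr(f: Finding) -> Finding:
    f.history.append("pr")
    return f

def map_compliance(f: Finding) -> Finding:
    f.history.append("compliance")
    return f

def learn(f: Finding) -> Finding:
    # Record the outcome so later runs could use it (stubbed as a list append).
    OUTCOMES.append(f"{f.cwe}:{'fixed' if f.patch else 'deferred'}")
    f.history.append("learn")
    return f

def run_workflow(f: Finding) -> Finding:
    f = triage(detect(f))
    if f.exploitable:  # only findings triaged as exploitable get an automatic fix and PR
        f = open_pr(generate_fix(f))
    return learn(map_compliance(f))

hot = run_workflow(Finding(cwe="CWE-89", file="app/db.py", exploitable=True))
cold = run_workflow(Finding(cwe="CWE-209", file="app/errors.py", exploitable=False))
print(hot.history)
print(cold.history)
```

Gating the fix and PR stages on the triage result is one plausible design choice; a real system might instead open draft PRs for everything and let reviewers decide.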

2. Pre-LLM Sanitization — Protecting Data in the AI Age

As AI-powered security tools send code to LLMs for analysis, a new risk emerges: sensitive data reaching third-party AI infrastructure. Precogs includes Pre-LLM Sanitization, which strips PII, secrets, and intellectual property from code before ANY AI model processes it. None of the other tools in this comparison offers this protection.
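
The general idea of sanitizing before an LLM call can be sketched with a few regular expressions: replace each sensitive span with a typed placeholder, keep the originals in a local "vault", and restore them after the model responds. The three patterns below (email addresses, AWS-style access key IDs, and quoted values assigned to `api_key`/`password`/`secret`) are illustrative assumptions, far narrower than a production rule set, and `sanitize`/`rehydrate` are hypothetical helpers.

```python
import re

# Illustrative redaction rules (assumptions, not any vendor's actual rule set).
# Each pattern captures the sensitive part in a group named "v".
REDACTIONS = [
    ("EMAIL", re.compile(r"(?P<v>[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,})")),
    ("AWS_ACCESS_KEY_ID", re.compile(r"\b(?P<v>AKIA[0-9A-Z]{16})\b")),
    ("SECRET", re.compile(r"""(?i)\b(?:api_key|password|secret)\s*=\s*["'](?P<v>[^"']+)["']""")),
]

def sanitize(code: str):
    """Swap sensitive values for placeholders before code leaves the machine.
    Returns the sanitized text and a vault mapping placeholders to originals."""
    vault = {}
    for label, pattern in REDACTIONS:
        def _sub(m, label=label):
            token = f"<{label}_{len(vault)}>"
            vault[token] = m.group("v")
            # Keep the surrounding text (e.g. the variable name), replace only the value.
            return m.group(0).replace(m.group("v"), token)
        code = pattern.sub(_sub, code)
    return code, vault

def rehydrate(text: str, vault: dict) -> str:
    """Restore original values locally, e.g. in a fix returned by the model."""
    for token, original in vault.items():
        text = text.replace(token, original)
    return text

code = 'api_key = "sk_live_123"\nowner = "alice@example.com"\nkey_id = "AKIAABCDEFGHIJKLMNOP"\n'
clean, vault = sanitize(code)
print(clean)  # only this text would be sent to the model
```

Keeping the vault on the client side means the model can still reason about *where* a secret is used without ever seeing its value.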

3. Advanced PII & Secrets — What Other SAST Tools Miss

Traditional SAST finds code vulnerabilities. But what about the customer data hardcoded in your test files? The API keys in your config? The NHS numbers in your logs? Precogs includes advanced PII detection (99.2% precision, 30+ data types) and multi-layer secrets scanning (regex + ML NER + Shannon entropy) as standard — not an add-on.
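
Two of the layers mentioned above can be illustrated without any ML. The sketch assumes a simple regex for credential-like tokens, a Shannon entropy cutoff of 4.0 bits per character (an arbitrary illustrative threshold), and, for PII, the NHS number's published modulus 11 check digit, which lets a scanner discard random 10-digit strings. The ML NER layer is not shown, and all function names are hypothetical.

```python
import math
import re
from collections import Counter

def shannon_entropy(s: str) -> float:
    """Bits per character, estimated from the string's own character frequencies."""
    if not s:
        return 0.0
    n = len(s)
    return -sum((c / n) * math.log2(c / n) for c in Counter(s).values())

# Layer 1 (regex): candidate tokens long enough to be credentials.
TOKEN = re.compile(r"[A-Za-z0-9+/_\-]{20,}")

def likely_secrets(text: str, threshold: float = 4.0):
    """Layer 2 (entropy): keep only candidates that look random enough to be keys."""
    return [t for t in TOKEN.findall(text) if shannon_entropy(t) > threshold]

def valid_nhs_number(raw: str) -> bool:
    """Modulus 11 check: weight the first nine digits 10..2, derive the check digit."""
    digits = re.sub(r"\s", "", raw)
    if not re.fullmatch(r"\d{10}", digits):
        return False
    total = sum(int(d) * w for d, w in zip(digits[:9], range(10, 1, -1)))
    check = 11 - total % 11
    if check == 11:
        check = 0
    return check != 10 and check == int(digits[9])

line = 'aws_secret_access_key = "wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY"'
print(likely_secrets(line))              # the identifier is too regular to pass the cutoff
print(valid_nhs_number("943 476 5919"))  # passes the check digit
print(valid_nhs_number("943 476 5918"))  # same digits, wrong check digit
```

Entropy alone produces false positives on hashes and UUIDs, which is one reason to combine it with other signals rather than rely on it alone.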

4. Context-Aware Analysis + Zero-Day Detection

Precogs understands code across files, functions, and dependencies. A SQL query constructed in File A, passed through File B, and executed in File C? Precogs traces the full path. And because AI IS the detection engine (not rules), it catches novel vulnerability patterns — including zero-days — that no rule has been written for.
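
The File A → File B → File C scenario is a classic taint-tracking problem: find a path from an untrusted source to a dangerous sink that never passes through a sanitizer. The sketch below assumes the data-flow graph has already been built (real analysers derive it from ASTs and call graphs; here it is written by hand), and every file, function, and node name is hypothetical.

```python
from collections import deque

# Hand-written data-flow graph spanning three files. Nodes are
# "file:function:value" strings; an edge means a value flows onward.
FLOWS = {
    "a.py:handler:request.args['q']": ["a.py:handler:term"],
    "a.py:handler:term": ["b.py:build_query:term"],
    "b.py:build_query:term": ["b.py:build_query:sql"],
    "b.py:build_query:sql": ["c.py:run:sql"],
    "c.py:run:sql": ["c.py:run:cursor.execute"],
}
SOURCES = {"a.py:handler:request.args['q']"}  # untrusted input
SINKS = {"c.py:run:cursor.execute"}            # dangerous call

def tainted_paths(flows, sources, sinks, sanitizers=frozenset()):
    """Breadth-first search from each source; report every path that reaches
    a sink without passing through a sanitizer node."""
    found = []
    for src in sources:
        queue = deque([[src]])
        while queue:
            path = queue.popleft()
            node = path[-1]
            if node in sinks:
                found.append(path)
                continue
            for nxt in flows.get(node, []):
                if nxt not in sanitizers and nxt not in path:  # skip cleaned values and cycles
                    queue.append(path + [nxt])
    return found

for path in tainted_paths(FLOWS, SOURCES, SINKS):
    print(" -> ".join(path))
```

Marking a node as a sanitizer (for example, if `build_query` switched to parameter binding) removes the path, which is how such an analysis avoids flagging code that has already been fixed.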

5. Real-Time Compliance Integration

Every finding is automatically mapped to relevant frameworks (OWASP Top 10, CWE, SOC 2, HIPAA, ISO/SAE 21434, UN R155) with severity scoring and exploitability context. Compliance reports are generated automatically rather than assembled by hand.
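
At its simplest, framework mapping is a lookup keyed by CWE ID, followed by aggregation into a report. The sketch below covers only a handful of CWE-to-OWASP Top 10 (2021) mappings; mappings to SOC 2, HIPAA, ISO/SAE 21434, or UN R155 controls depend on an organisation's own control set and are deliberately not invented here. The function names are hypothetical.

```python
# Illustrative subset of CWE -> OWASP Top 10 (2021) categories.
OWASP_2021 = {
    "CWE-22": "A01:2021 Broken Access Control",
    "CWE-327": "A02:2021 Cryptographic Failures",
    "CWE-79": "A03:2021 Injection",
    "CWE-89": "A03:2021 Injection",
    "CWE-798": "A07:2021 Identification and Authentication Failures",
    "CWE-918": "A10:2021 Server-Side Request Forgery (SSRF)",
}

def tag_findings(findings):
    """Attach an OWASP category to each finding; unknown CWEs are marked unmapped."""
    return [{**f, "owasp": OWASP_2021.get(f["cwe"], "unmapped")} for f in findings]

def compliance_summary(findings):
    """Count findings per OWASP category, as a report generator might."""
    summary = {}
    for f in tag_findings(findings):
        summary[f["owasp"]] = summary.get(f["owasp"], 0) + 1
    return summary

scan = [{"cwe": "CWE-89"}, {"cwe": "CWE-79"}, {"cwe": "CWE-798"}, {"cwe": "CWE-1"}]
print(compliance_summary(scan))
```

Reporting "unmapped" explicitly, rather than dropping those findings, keeps gaps in the mapping table visible to the people reading the report.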

6. Continuous Learning

The AI improves with every scan — learning from new vulnerability patterns, evolving code practices, and emerging threat intelligence. Rule-based tools only improve when someone writes a new rule.

Evaluation Criteria for AI Code Security Tools


| Question to Ask | Why It Matters |
|---|---|
| Does the tool run an agentic workflow (detect → fix → PR) or just detect? | End-to-end automation versus manual triage and fixing is a 10x productivity difference |
| Does it include PII detection and Pre-LLM Sanitization? | As AI tools process more code, protecting data during analysis is critical |
| Can the tool detect vulnerabilities not covered by pre-written rules? | This separates AI-native tools from pattern-matching ones and determines zero-day coverage |
| What is the false positive rate? | High FP rates (10-35%) create alert fatigue and slow development |
| Does it map findings to compliance frameworks automatically? | Manual compliance mapping wastes security team time |
| Can it explain WHY something is a vulnerability, with CWE context? | AI should provide context, not just flags |

Frequently Asked Questions

1. What is AI-native code security?

AI-native code security means the AI IS the detection engine — not an add-on. The AI models are purpose-trained to understand code structure, detect vulnerability patterns, and generate fixes. This differs from "AI-assisted" tools that use AI for triage but rely on traditional detection.

2. What is an Agentic AI workflow in code security?

An agentic AI workflow means the AI autonomously handles the full security cycle: detect vulnerabilities → triage by severity → generate code fixes → deliver as pull requests → map to compliance → learn from outcomes. This eliminates manual triage and remediation, reducing mean-time-to-fix from days to minutes.

3. What is Pre-LLM Sanitization?

Pre-LLM Sanitization strips PII, secrets, and intellectual property from code before it reaches any AI or LLM model for analysis. This prevents sensitive data from leaking to third-party AI infrastructure. Currently, only Precogs AI offers this as a built-in feature.

4. Which AI code security tool is most accurate?

Precogs AI reports 98% precision (roughly 2% of its reported findings are false positives) using a multi-model AI ensemble. Traditional tools typically report false positive rates of 10-35%.
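
Accuracy figures are easier to compare once the formulas are explicit. Strictly, 1 minus precision is the false discovery rate (the share of alerts that are wrong), while the textbook false positive rate divides false alarms by all truly safe locations; vendors often use "false positive rate" loosely for the former. The counts below are a made-up scan, not measured data.

```python
def precision(tp: int, fp: int) -> float:
    """Share of reported findings that are real vulnerabilities: TP / (TP + FP)."""
    return tp / (tp + fp)

def false_discovery_rate(tp: int, fp: int) -> float:
    """Share of reported findings that are false alarms: FP / (TP + FP) = 1 - precision."""
    return fp / (tp + fp)

def false_positive_rate(fp: int, tn: int) -> float:
    """Share of truly safe code locations that were wrongly flagged: FP / (FP + TN)."""
    return fp / (fp + tn)

# Hypothetical scan: 1,000 findings (980 real, 20 false alarms) among
# 50,000 code locations that were actually safe (20 flagged + 49,980 not).
tp, fp, tn = 980, 20, 49_980
print(f"precision            {precision(tp, fp):.2%}")
print(f"false discovery rate {false_discovery_rate(tp, fp):.2%}")
print(f"false positive rate  {false_positive_rate(fp, tn):.2%}")
```

When comparing vendors, it is worth asking which of these two denominators a quoted "false positive rate" uses, since the same scan can yield very different numbers.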

5. Can AI SAST tools detect zero-day vulnerabilities?

AI-native tools like Precogs can detect novel vulnerability patterns not previously catalogued — similar to how a researcher spots suspicious code. Pattern-matching tools (Semgrep, SonarQube) only detect what existing rules cover.

6. Should I use AI code security tools for AI-generated code?

Yes. Code generated by AI assistants (Copilot, ChatGPT, Claude) often contains subtle vulnerabilities. AI-native security tools with Pre-LLM Sanitization are particularly effective — they secure the code AND protect your data during the analysis.
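
As an example of the kind of subtle flaw meant here, the sketch below contrasts an f-string SQL query (the sort of code an assistant might plausibly suggest) with a parameterized one, using Python's built-in `sqlite3` and an in-memory database. The table and function names are hypothetical; the behaviour of `?` placeholders is standard `sqlite3`.

```python
import sqlite3

def setup() -> sqlite3.Connection:
    """In-memory database with two users, for demonstration only."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
    conn.executemany("INSERT INTO users VALUES (?, ?)",
                     [("alice", "admin"), ("bob", "user")])
    return conn

def find_user_unsafe(conn, name):
    # Builds SQL by string interpolation: input can rewrite the query (CWE-89).
    return conn.execute(f"SELECT name, role FROM users WHERE name = '{name}'").fetchall()

def find_user_safe(conn, name):
    # Parameterized query: the driver binds `name` as data, never as SQL text.
    return conn.execute("SELECT name, role FROM users WHERE name = ?", (name,)).fetchall()

conn = setup()
payload = "x' OR '1'='1"
print(find_user_unsafe(conn, payload))  # the injected OR clause matches every row
print(find_user_safe(conn, payload))    # no user is literally named the payload
```

Both functions look reasonable at a glance and return the same result for ordinary names, which is exactly why this class of bug slips through review.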

Try the most AI-native security platform on the market.

Precogs AI: Agentic AI that detects, fixes, and protects. 98% precision, autonomous PR fixes, PII detection, Pre-LLM Sanitization, and full compliance automation. See the difference in your first scan.